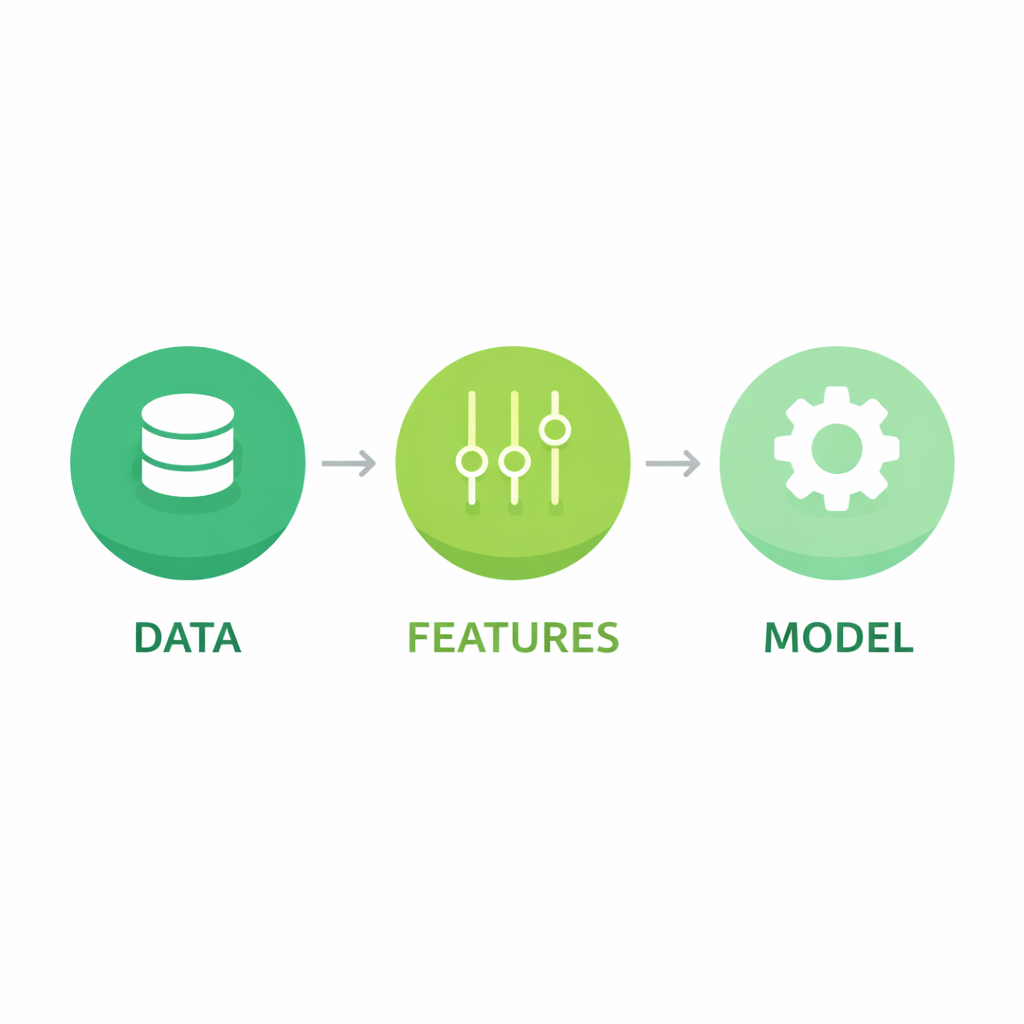

To understand how Machine Learning really works, you need to master its three essential components: data, features, and algorithms. Every model — from a simple linear regression to a deep neural network — is built on top of these three pillars.

It may sound simple, but each component hides critical decisions that determine whether your model becomes reliable or completely useless. Let’s break them down clearly and practically.

1. Data: The Foundation of Everything

Data is the fuel of Machine Learning. Without data, there is no learning. But it’s not just about having data — it’s about having the right data.

A high‑quality dataset must be:

- Sufficient: enough samples to capture the variability of the problem.

- Relevant: contains information directly related to the target you want to predict.

- Clean: free of errors, duplicates, and inconsistencies, with any missing values handled deliberately (removed or filled in).

- Representative: reflects the real-world distribution you want to model.
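Two of these properties, sufficient and clean, can be checked mechanically before any training starts. Relevance and representativeness need domain knowledge. Here is a minimal sketch, assuming the data is a list of Python dicts. The `quality_report` helper, the field names, and the 1,000-row threshold are hypothetical choices for illustration.

```python
# Invented toy records (price in dollars); field names are hypothetical.
records = [
    {"sqft": 1400, "rooms": 3, "price": 250_000},
    {"sqft": 2100, "rooms": 4, "price": None},     # missing target value
    {"sqft": 1400, "rooms": 3, "price": 250_000},  # exact duplicate of the first row
    {"sqft": -50, "rooms": 2, "price": 180_000},   # impossible value (data-entry error)
]


def quality_report(rows, min_rows=1000):
    """Count basic quality problems in a list of dict records.

    min_rows is an arbitrary threshold for 'sufficient'; the right
    sample size depends on the problem.
    """
    seen = set()
    duplicates = missing = invalid = 0
    for row in rows:
        key = tuple(sorted(row.items()))  # hashable fingerprint of the whole row
        if key in seen:
            duplicates += 1
        seen.add(key)
        missing += sum(1 for value in row.values() if value is None)
        # Assumed domain rule: square footage must be positive.
        if row.get("sqft") is not None and row["sqft"] <= 0:
            invalid += 1
    return {
        "rows": len(rows),
        "enough_rows": len(rows) >= min_rows,
        "duplicates": duplicates,
        "missing_values": missing,
        "invalid_values": invalid,
    }


print(quality_report(records))
```

Each of the four toy rows after the first triggers one kind of problem, so the report flags one duplicate, one missing value, one invalid value, and too few rows overall.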

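Example: If you train a facial recognition model mostly on images of light-skinned people, it will likely be much less accurate for people with darker skin tones. That is data bias (the training set does not represent the population the model will serve), and it is one of the most common causes of ML failure.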
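One simple way to catch the problem in the facial recognition example is to compare how often each group appears in the training data with how often it appears in the population the model will serve. A minimal sketch follows. The group labels, the reference shares, the ±0.05 tolerance, and the `representation_gaps` helper are all hypothetical.

```python
from collections import Counter


def representation_gaps(train_groups, reference_shares):
    """For each group: (share in training data) - (share in the reference population)."""
    counts = Counter(train_groups)  # Counter returns 0 for groups that never appear
    total = len(train_groups)
    return {group: counts[group] / total - share
            for group, share in reference_shares.items()}


# Hypothetical group labels and an assumed reference population; not real statistics.
train_groups = ["A"] * 90 + ["B"] * 10
reference = {"A": 0.5, "B": 0.3, "C": 0.2}

for group, gap in representation_gaps(train_groups, reference).items():
    # The +/- 0.05 tolerance is an arbitrary choice for this sketch.
    if gap < -0.05:
        status = "under-represented"
    elif gap > 0.05:
        status = "over-represented"
    else:
        status = "ok"
    print(f"{group}: {gap:+.2f} ({status})")
```

Group C never appears in the training data at all, which is exactly the failure mode described above. A balanced sample does not by itself guarantee equal accuracy, so measuring performance separately for each group is a useful second check.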

Golden rule: A model is only as good as the data it learns from.

2. Features: Translating the Real World Into Numbers

Algorithms don’t understand concepts like “a nice house” or “a loyal customer.” They only understand numbers. Features are the way we translate real‑world information into numerical representations that a model can process.

Examples:

- Predicting house prices → square footage, number of rooms, age of the property

- Detecting spam → keyword frequency, number of links, message length

- Image recognition → pixel intensity, edges, textures

- Customer churn → purchase frequency, time since last interaction
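To make the spam example concrete, here is a minimal sketch that turns a raw message into the three features listed above: keyword frequency, number of links, and message length. The keyword list, the URL regex, and the `spam_features` helper are assumptions for illustration, not a production spam filter.

```python
import re

# Hypothetical keyword list; a real filter would curate or learn its own.
SPAM_KEYWORDS = {"free", "winner", "prize", "urgent"}
URL_PATTERN = re.compile(r"https?://\S+")


def spam_features(message: str) -> list[float]:
    """Turn a raw message into [keyword frequency, number of links, length in characters]."""
    words = re.findall(r"[a-z']+", message.lower())
    keyword_hits = sum(1 for w in words if w in SPAM_KEYWORDS)
    keyword_freq = keyword_hits / len(words) if words else 0.0  # share of words that are keywords
    n_links = len(URL_PATTERN.findall(message))
    return [keyword_freq, float(n_links), float(len(message))]


msg = "URGENT: you are a winner! Claim your FREE prize at http://example.com"
print(spam_features(msg))
```

Whatever the message says, the algorithm only ever sees this short list of numbers.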

Not all features are useful. Some add valuable information; others only introduce noise.

Useful for predicting fuel consumption:

- Vehicle weight

- Engine displacement

- Aerodynamics

Not useful:

- Car color

- Owner’s name

- Serial number
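One quick way to see the difference between a useful and a useless feature is to measure how strongly each one moves with the target. The sketch below computes the Pearson correlation for two features on five cars. The numbers are invented for illustration, and the `pearson` helper is hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Invented toy data for five cars (not real measurements).
consumption = [5.1, 5.9, 6.4, 7.5, 8.0]  # target, L/100 km
features = {
    "weight_kg": [1000, 1200, 1400, 1600, 1800],
    "serial_number": [20417, 50417, 30417, 10417, 40417],
}

for name, values in features.items():
    print(f"{name}: r = {pearson(values, consumption):+.2f}")
```

Weight comes out close to +1, while the serial number lands near 0. Keep in mind that correlation only captures linear relationships, five rows are far too few to trust, and a categorical feature such as car color would have to be encoded as numbers before it could be screened this way.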

This is where feature engineering comes in — the craft of creating and transforming features so the model can learn better. In many projects, feature engineering matters more than the algorithm itself.
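Feature engineering often means deriving new features from raw records. As a minimal sketch for the churn example, assume each customer's history is just a list of purchase dates plus the date of their last contact. The hypothetical `churn_features` function below derives purchase frequency and time since last interaction. The 30-day window is an arbitrary choice.

```python
from datetime import date


def churn_features(purchase_dates, last_contact, today):
    """Derive [purchases per 30 days, days since last interaction] from raw dates."""
    first = min(purchase_dates)
    active_days = max((today - first).days, 1)  # avoid division by zero for brand-new customers
    purchases_per_30d = len(purchase_dates) * 30 / active_days
    last_interaction = max(max(purchase_dates), last_contact)  # a purchase also counts as interaction
    days_since_last = (today - last_interaction).days
    return [purchases_per_30d, float(days_since_last)]


# Hypothetical customer history.
purchases = [date(2024, 1, 5), date(2024, 2, 10), date(2024, 3, 1)]
print(churn_features(purchases, last_contact=date(2024, 3, 20), today=date(2024, 4, 4)))
```

Neither feature exists in the raw data. Both are computed from it, and both are far easier for an algorithm to use than a list of dates.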

3. The Algorithm: The Method That Learns

The algorithm is the mathematical procedure that learns the relationship between features and the target outcome; running it on training data produces the trained model. It’s the “brain” of the process, but it cannot work without good data and meaningful features.
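To make "learning the relationship" concrete, here is a sketch of the simplest case: linear regression with a single feature, fitted by ordinary least squares. The algorithm chooses the slope `w` and intercept `b` that minimize the squared prediction errors, and the trained model is just those two numbers. The data and the `fit_line` helper are invented for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y ~ w * x + b with a single feature."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    w = sxy / sxx    # slope that minimizes the squared error
    b = my - w * mx  # the fitted line passes through the point of means
    return w, b


# Invented toy data: square footage -> price (in thousands).
sqft = [1000, 1500, 2000, 2500]
price = [200, 275, 350, 425]

w, b = fit_line(sqft, price)
print(f"price ~ {w:.3f} * sqft + {b:.1f}")
print("prediction for 1800 sqft:", w * 1800 + b)
```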

Common algorithms include:

- Linear Regression

- Decision Trees

- K‑Nearest Neighbors (K‑NN)

- Support Vector Machines (SVM)

- Neural Networks

Each algorithm has strengths and limitations. The right choice depends on the type of problem (for example, predicting a number versus a category), the amount of data available, and how complex the patterns you need to capture are.
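Different algorithms learn in very different ways. K-NN, for instance, has no real training step. It stores the training data and classifies a new point by a majority vote of its k closest neighbors. Here is a minimal sketch, assuming Euclidean distance, k = 3, and invented two-feature spam data. The `knn_predict` name is hypothetical.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, query, k=3):
    """Classify `query` by majority vote among its k nearest training points (Euclidean distance)."""
    dists = sorted(
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    )
    votes = Counter(label for _, label in dists[:k])
    return votes.most_common(1)[0][0]


# Invented toy data; features are [number of links, message length / 100].
X = [[0, 1.2], [1, 0.8], [0, 2.0], [5, 0.4], [6, 0.3], [4, 0.5]]
y = ["ham", "ham", "ham", "spam", "spam", "spam"]

print(knn_predict(X, y, [5, 0.5]))
print(knn_predict(X, y, [0, 1.5]))
```

Because K-NN relies on distances, feature scale matters. Message length is divided by 100 here (an assumption of this sketch) so that it does not drown out the link count. This shows how algorithm choice and feature design depend on each other.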

But here’s a truth many beginners overlook: Even the most advanced algorithm cannot fix bad data or poorly designed features.

How the Three Components Work Together

The relationship is straightforward:

- Data describes the real world

- Features represent that information in a useful way

- The algorithm learns patterns from those representations

If one component fails, the entire model collapses. Only when all three are well executed do you get strong, reliable results.
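The three steps can be chained into a tiny end-to-end sketch. The code cleans the raw data, turns each record into a numeric feature, and then lets an algorithm learn from those features. Everything here is hypothetical: the raw string format, the field names, and the helper functions. Ordinary least squares stands in for "the algorithm".

```python
def clean(rows):
    """Data step: drop exact duplicates and rows whose target (price) is missing."""
    seen, kept = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key in seen or row.get("price") is None:
            continue
        seen.add(key)
        kept.append(row)
    return kept


def to_feature(row):
    """Feature step: parse living area from a raw string such as '1,500 sqft'."""
    return float(row["area"].replace(",", "").split()[0])


def fit_line(xs, ys):
    """Algorithm step: ordinary least squares for price ~ w * sqft + b."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    w = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return w, my - w * mx


# Invented raw data (price in thousands) with a duplicate and a missing target.
raw = [
    {"area": "1,000 sqft", "price": 200},
    {"area": "1,500 sqft", "price": 275},
    {"area": "1,500 sqft", "price": 275},   # duplicate row
    {"area": "2,000 sqft", "price": None},  # missing target
    {"area": "2,500 sqft", "price": 425},
]

rows = clean(raw)
xs = [to_feature(r) for r in rows]
ys = [r["price"] for r in rows]
w, b = fit_line(xs, ys)
print(f"kept {len(rows)} of {len(raw)} rows")
print(f"predicted price for 2,000 sqft: {w * 2000 + b:.1f}")
```

Skip the cleaning step and the `None` price makes the fit raise a `TypeError`, while the duplicate row quietly gets double weight. Skip the feature step and the algorithm receives strings it cannot do arithmetic on.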

One‑Sentence Summary

A strong Machine Learning model is built on high‑quality data, well‑designed features, and an algorithm suited to the problem.

Next in the Series

In the next article, we’ll explore the full Machine Learning workflow, from data collection to model evaluation, and see where these three components fit in a real-world ML pipeline.