When we train a Machine Learning model, it’s not enough to just “teach” it with some data and hope it works well. We also need to check whether it can handle data it has never seen before. That’s where validation comes in.

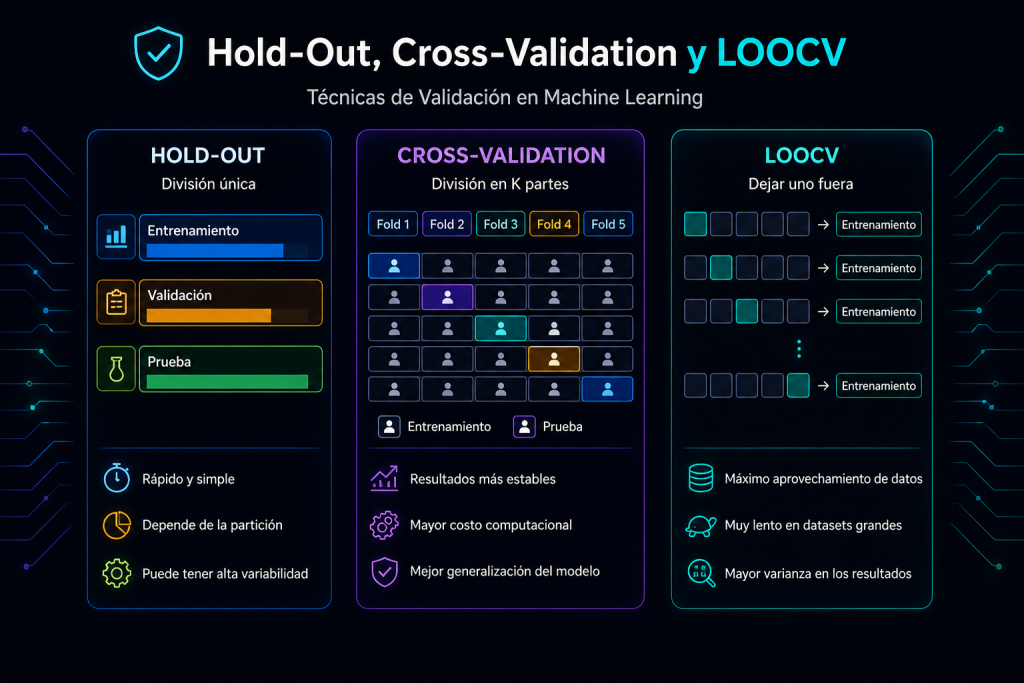

Hold-Out, Cross‑Validation, and LOOCV: Key Data Validation Techniques in Machine Learning

Choosing how to split your data isn’t a minor technical detail. It’s a decision that can directly influence whether your model learns well or just appears to work well.

In Machine Learning, good validation is key to measuring how well a model generalizes to new data. In this post we’ll look at three of the most commonly used techniques (hold-out, cross-validation and LOOCV) and, for each one, when to use it, its advantages and its limitations.

1. Hold-out: splitting the data once

The simplest method consists of separating the data into two or three groups: one for training, another for validation and, in some cases, a third for final testing.

It’s a quick and easy technique to apply, which is why it’s widely used when we have plenty of data.
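As a rough illustration of the idea above, here is a minimal hold-out sketch using only the Python standard library. The function name `hold_out_split`, the 60/20/20 proportions and the fixed seed are assumptions chosen for the example, not a recommendation; libraries such as scikit-learn offer ready-made equivalents (`train_test_split`).

```python
import random

def hold_out_split(records, val_fraction=0.2, test_fraction=0.2, seed=42):
    """Shuffle once, then cut into train / validation / test lists."""
    if not 0 <= val_fraction + test_fraction < 1:
        raise ValueError("val_fraction + test_fraction must be in [0, 1)")
    shuffled = list(records)                # copy so the caller's data is untouched
    random.Random(seed).shuffle(shuffled)   # fixed seed makes the split reproducible
    n = len(shuffled)
    n_test = round(n * test_fraction)
    n_val = round(n * val_fraction)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

# Toy data: 100 record ids standing in for real rows.
train, val, test = hold_out_split(range(100))
print(len(train), len(val), len(test))  # 60 20 20
```

Changing `seed` produces a different partition, which is exactly why a single hold-out score can move around from one run to the next.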

Advantages

- Simple to understand

- Executes quickly

- Works well when there’s lots of data

Limitations

- Results can vary depending on how the split is made

- With little data, it can be unreliable

- In imbalanced datasets, the partition can greatly affect evaluation
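One common way to soften the imbalance problem is a stratified split, where each class is divided in the same proportion. The sketch below is an assumption-laden illustration: `stratified_hold_out` is a hypothetical helper, and the 90/10 toy labels are made up for the example.

```python
import random
from collections import defaultdict

def stratified_hold_out(labels, val_fraction=0.2, seed=0):
    """Return (train_idx, val_idx), splitting every class in the same proportion."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train_idx, val_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)                       # shuffle within the class only
        n_val = round(len(indices) * val_fraction)
        val_idx.extend(indices[:n_val])
        train_idx.extend(indices[n_val:])
    return sorted(train_idx), sorted(val_idx)

# Toy imbalanced dataset: 90 negatives, 10 positives.
labels = [0] * 90 + [1] * 10
train_idx, val_idx = stratified_hold_out(labels)
positives_in_val = sum(labels[i] for i in val_idx)
print(len(val_idx), positives_in_val)  # 20 2
```

With a plain random split, the validation set could end up with zero or five positives by chance; here it keeps the original 10% ratio.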

When to use it

When you need a quick evaluation and have enough data so the split doesn’t distort the result too much.

2. Cross-Validation: repeating the evaluation multiple times

Cross-validation seeks a more stable evaluation. Instead of splitting the data once, it divides it into several parts (folds), usually called k. The model is trained and validated k times, with a different fold held out for validation in each round, and the k scores are then averaged.

This way we don’t depend on a single partition, but on several.
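The procedure can be sketched in a few lines of plain Python. Everything named here is an assumption for illustration: `k_fold_indices` and `cross_validate` are hypothetical helpers, and the “model” is a toy that always predicts the training mean, scored with mean absolute error.

```python
import random
from statistics import mean

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; each sample is validated exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]   # k nearly equal-sized folds
    for i, val_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx

def fit_mean(xs, ys):
    return mean(ys)            # toy "model": always predicts the training mean

def mean_abs_error(model, xs, ys):
    return mean(abs(y - model) for y in ys)

def cross_validate(xs, ys, fit, score, k=5):
    """Train and score once per fold; return the list of k scores."""
    scores = []
    for train_idx, val_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(score(model, [xs[i] for i in val_idx], [ys[i] for i in val_idx]))
    return scores

xs = list(range(20))
ys = [2 * x + 1 for x in xs]
scores = cross_validate(xs, ys, fit_mean, mean_abs_error, k=5)
print(len(scores), mean(scores))   # five scores, summarized by their average
```

Reporting the spread of `scores` alongside the mean gives a sense of how much the result depends on the partition.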

Advantages

- More stable results

- Reduces the impact of a “bad” partition

- Usually offers a better estimate of real performance

Limitations

- Takes longer to execute

- Can be costly if the model is heavy

- Requires adaptations for time series (for example, splits that respect temporal order)
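To make the time series caveat concrete, one possible adaptation is an expanding-window split, in which the validation block always comes after the training block. This is only a sketch of one scheme among several; `expanding_window_splits` is a hypothetical helper, and it assumes the samples are already sorted by time.

```python
def expanding_window_splits(n_samples, n_splits=3):
    """Yield (train_idx, val_idx) where validation always follows training in time."""
    fold_size = n_samples // (n_splits + 1)
    if fold_size == 0:
        raise ValueError("not enough samples for the requested number of splits")
    for i in range(1, n_splits + 1):
        train_end = i * fold_size
        # The last split absorbs any leftover samples at the end of the series.
        val_end = n_samples if i == n_splits else train_end + fold_size
        yield list(range(train_end)), list(range(train_end, val_end))

splits = list(expanding_window_splits(10, n_splits=3))
for train_idx, val_idx in splits:
    print(train_idx, val_idx)
# [0, 1] [2, 3]
# [0, 1, 2, 3] [4, 5]
# [0, 1, 2, 3, 4, 5] [6, 7, 8, 9]
```

Unlike shuffled folds, the model never trains on observations that are later than the ones it is validated on.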

When to use it

When you want a more solid evaluation and can invest a bit more time.

3. LOOCV: leaving one observation out each time

LOOCV stands for Leave-One-Out Cross-Validation. The idea is simple: leave a single data point out for validation and train with all the others. Then repeat the process with each observation in the dataset.

If you have 1,000 records, the model will be trained 1,000 times.
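A small sketch shows why the cost grows with the dataset: there is one training run per observation. The helper names `leave_one_out_indices` and `loocv_errors` are hypothetical, and the “model” is again a toy that predicts the mean of the training values.

```python
from statistics import mean

def leave_one_out_indices(n_samples):
    """Yield (train_idx, val_idx) with exactly one held-out sample per round."""
    for i in range(n_samples):
        train_idx = [j for j in range(n_samples) if j != i]
        yield train_idx, [i]

def loocv_errors(ys):
    """Absolute error of a mean predictor on each held-out value."""
    errors = []
    for train_idx, val_idx in leave_one_out_indices(len(ys)):
        prediction = mean(ys[j] for j in train_idx)   # "training" on n - 1 points
        errors.append(abs(ys[val_idx[0]] - prediction))
    return errors

ys = [3.0, 5.0, 4.0, 6.0, 2.0]
errors = loocv_errors(ys)
print(len(errors))   # 5: one fit per observation
print(errors[0])     # 1.25: |3.0 - mean(5.0, 4.0, 6.0, 2.0)|
```

With a real model, each of those rounds is a full training run, so the loop body is where the time goes.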

Advantages

- Uses almost all the data for training

- Useful when the dataset is very small

- It’s a very thorough evaluation: every observation is used for validation exactly once

Limitations

- Very slow

- Doesn’t scale well with lots of data

- The performance estimate can have high variance for some models

When to use it

Only when you have little data and want to squeeze the most out of each observation.

Quick comparison

| Technique | Main idea | Strong point | When to use it |
|---|---|---|---|
| Hold-out | Split once | Speed | When you have lots of data |
| Cross-Validation | Split multiple times | More stable results | When you want a reliable evaluation |
| LOOCV | Leave one out each time | Make the most of the data | When the dataset is very small |

Which one to choose?

There’s no universal technique that works best in all cases. The choice depends on the dataset size, available time and the level of confidence you need in the metrics.

Hold-out is ideal if you’re looking for speed.

Cross-validation is the most balanced option and one of the most used in practice.

LOOCV is useful when data is very scarce and you want to leverage each observation.

A bad validation strategy can make a model seem better than it really is. That’s why choosing how to validate your model is as important as choosing the right algorithm.

In summary

Hold-out is like doing a quick test.

Cross-validation is like repeating the test several times to be more certain.

LOOCV is the extreme version for when you have almost no data.

There’s no best option for all cases: it depends on how much data you have, the time you want to invest and how much confidence you need in the evaluation.

TL;DR

Hold-out is fast, cross-validation is more reliable and LOOCV is mainly used when there’s very little data. The best technique depends on the context, not on a single rule.